Federated Linear Regression

Linear Regression (LinR) is a simple statistical model widely used for predicting continuous values. FATE provides Heterogeneous Linear Regression (HeteroLinR) and SSHE Linear Regression (HeteroSSHELinR).

The table below lists the features of the HeteroLinR and HeteroSSHELinR models:

| Linear Model | Arbiter-less Training | Weighted Training | Multi-Host | Cross Validation | Warm-Start/CheckPoint |
|---|---|---|---|---|---|
| Hetero LinR | ✗ | ✓ | ✓ | ✓ | ✓ |
| Hetero SSHELinR | ✓ | ✓ | ✗ | ✓ | ✓ |

Heterogeneous LinR

HeteroLinR also supports multi-host training. You can specify multiple hosts in the job configuration file, as in the provided examples under examples/dsl/v2/hetero_linear_regression.
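As a rough illustration of what "multiple hosts" means in a job configuration, the fragment below builds only the role section as a Python dict. It assumes the `role` layout with `guest`, `host`, and `arbiter` lists; the party ids are hypothetical placeholders, and the provided examples remain the reference for the full file.

```python
import json

# Hypothetical party ids; replace them with the ids of your own deployment.
# The "role" layout is an assumption to be checked against the provided examples.
job_roles = {
    "role": {
        "guest": [9999],
        "host": [10000, 10001],  # more than one entry means multi-host training
        "arbiter": [10000],      # HeteroLinR is not arbiter-less
    }
}

# Render the fragment as JSON, the format used by job configuration files.
print(json.dumps(job_roles, indent=2))
```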

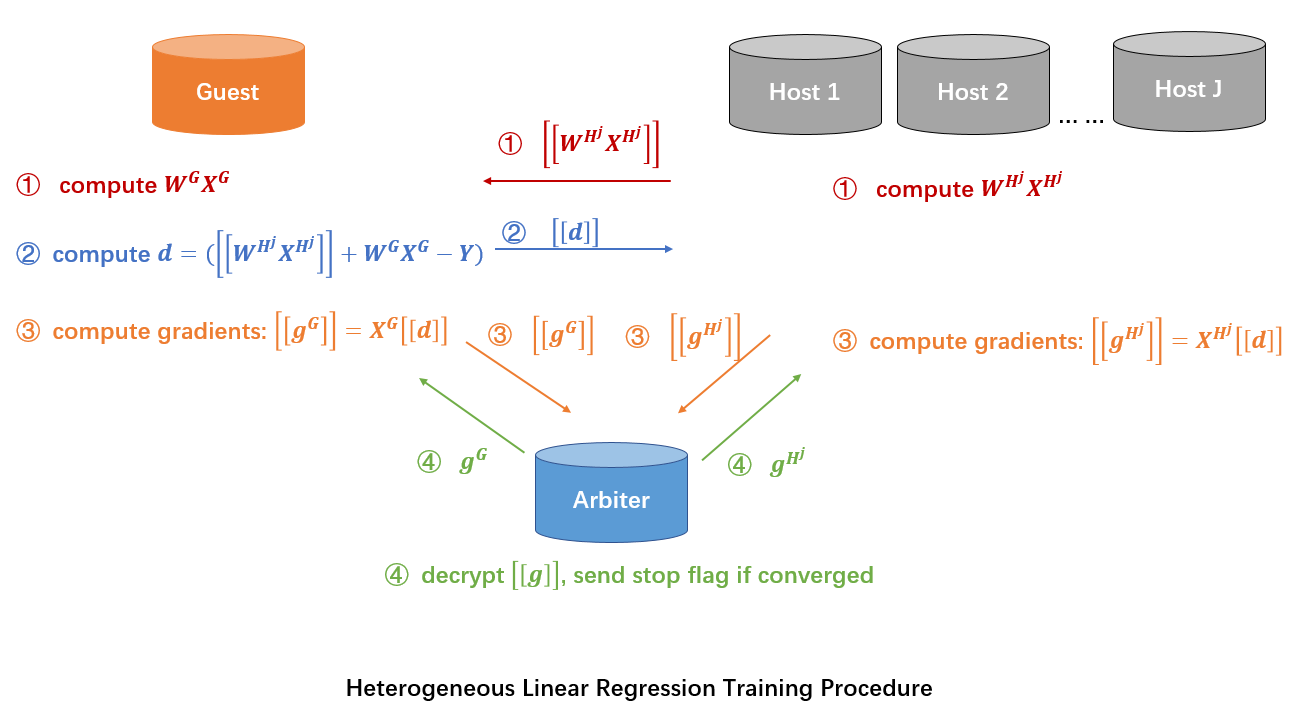

Here we simplify the participants of the federation process into three parties. Party A represents the Guest and party B represents the Host. Party C, also known as the "Arbiter", is a third party that acts as coordinator and is responsible for generating the private and public keys.

The process of HeteroLinR training is shown below:

A sample alignment process is conducted before training. It identifies the overlapping samples in the databases of all parties, and the federated model is built on those overlapping samples. The whole sample alignment process is conducted in encrypted mode, so confidential information (e.g. sample ids) is not leaked.
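To make the goal of sample alignment concrete, here is a minimal sketch that finds the overlapping ids of two parties by comparing salted digests. This is only a conceptual stand-in: it is not the encrypted protocol described above and does not offer the same protection. The function names and the shared salt are hypothetical.

```python
import hashlib


def hashed_ids(ids, salt):
    """Map each salted SHA-256 digest back to its raw sample id."""
    return {hashlib.sha256((salt + str(i)).encode()).hexdigest(): i for i in ids}


def align_samples(guest_ids, host_ids, salt="shared-salt"):
    """Return the guest's raw ids whose digests also appear on the host side."""
    guest_map = hashed_ids(guest_ids, salt)
    host_digests = set(hashed_ids(host_ids, salt))
    return sorted(raw for digest, raw in guest_map.items() if digest in host_digests)


# Only the ids present on both sides take part in training.
overlap = align_samples(["u1", "u2", "u3"], ["u2", "u3", "u4"])
print(overlap)
```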

In the training process, party A and party B each compute the elements needed for the final gradients. The Arbiter aggregates these elements, calculates the final gradients, and transfers them back to the corresponding parties. For more details on the secure model-building process, please refer to this paper.
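The split of work described above can be sketched in plaintext. The example assumes a squared-error loss and a model whose prediction is the sum of each party's partial linear score. All encryption and the Arbiter's decryption step are deliberately left out, and the data and function names are hypothetical.

```python
def partial_forward(X, w):
    """Each party computes its own share of the prediction from local features."""
    return [sum(x_j * w_j for x_j, w_j in zip(row, w)) for row in X]


def local_gradient(X, residual):
    """Gradient of the mean squared-error loss with respect to local weights."""
    n = len(X)
    return [sum(row[j] * r for row, r in zip(X, residual)) / n
            for j in range(len(X[0]))]


# Party A (Guest) holds one feature and the labels; party B (Host) holds another feature.
X_a = [[1.0], [2.0], [3.0]]
X_b = [[1.0], [1.0], [1.0]]
y = [3.0, 5.0, 7.0]


def training_step(w_a, w_b, lr=0.1):
    """One plaintext gradient step; returns updated weights and the loss before the update."""
    u_a = partial_forward(X_a, w_a)
    u_b = partial_forward(X_b, w_b)
    # The residual combines both partial scores with the Guest's labels.
    residual = [a + b - t for a, b, t in zip(u_a, u_b, y)]
    g_a = local_gradient(X_a, residual)
    g_b = local_gradient(X_b, residual)
    loss = sum(r * r for r in residual) / (2 * len(y))
    new_w_a = [w - lr * g for w, g in zip(w_a, g_a)]
    new_w_b = [w - lr * g for w, g in zip(w_b, g_b)]
    return new_w_a, new_w_b, loss


w_a, w_b = [0.0], [0.0]
w_a, w_b, loss_before = training_step(w_a, w_b)
w_a, w_b, loss_after = training_step(w_a, w_b)
print(loss_before, loss_after)
```

In the federated setting the residual and gradient elements would be exchanged in encrypted form rather than in the clear as shown here.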

Param

linear_regression_param

Classes

LinearParam(penalty='L2', tol=0.0001, alpha=1.0, optimizer='sgd', batch_size=-1, learning_rate=0.01, init_param=InitParam(), max_iter=20, early_stop='diff', encrypt_param=EncryptParam(), sqn_param=StochasticQuasiNewtonParam(), encrypted_mode_calculator_param=EncryptedModeCalculatorParam(), cv_param=CrossValidationParam(), decay=1, decay_sqrt=True, validation_freqs=None, early_stopping_rounds=None, stepwise_param=StepwiseParam(), metrics=None, use_first_metric_only=False, floating_point_precision=23, callback_param=CallbackParam())

Bases: LinearModelParam

Parameters used for Linear Regression.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| penalty | | Penalty method used in LinR, either 'L2' or 'L1'. Note that 'L1' is not supported when using the encrypted version in HeteroLinR. When using Homo-LR, 'L1' is not supported. | 'L2' |
| tol | float | The tolerance of convergence. | 0.0001 |
| alpha | float | Regularization strength coefficient. | 1.0 |
| optimizer | | Optimization method. | 'sgd' |
| batch_size | int | Batch size when updating the model. -1 means using all data in one batch, i.e. no mini-batch strategy. | -1 |
| learning_rate | float | Learning rate. | 0.01 |
| max_iter | int | The maximum number of training iterations. | 20 |
| init_param | | Init param method object. | InitParam() |
| early_stop | | Method used to judge convergence. a) diff: use the difference of loss between two iterations to judge convergence. b) abs: use the absolute value of loss to judge convergence, i.e. if loss < tol, the model has converged. c) weight_diff: use the difference between the weights of two consecutive iterations. | 'diff' |
| encrypt_param | | Encrypt param. | EncryptParam() |
| encrypted_mode_calculator_param | | Encrypted mode calculator param. | EncryptedModeCalculatorParam() |
| cv_param | | Cross validation param. | CrossValidationParam() |
| decay | | Decay rate for the learning rate. The learning rate follows the schedule `lr = lr0 / (1 + decay*t)` if decay_sqrt is False, and `lr = lr0 / sqrt(1 + decay*t)` if decay_sqrt is True, where t is the iteration number. | 1 |
| decay_sqrt | | `lr = lr0 / (1 + decay*t)` if decay_sqrt is False; otherwise, `lr = lr0 / sqrt(1 + decay*t)`. | True |
| validation_freqs | | Validation frequency during training; required when using early stopping. The default value is None; 1 is suggested. You can set it to a number larger than 1 to speed up training by skipping validation rounds. When it is larger than 1, a number that evenly divides max_iter is recommended; otherwise, you will miss the validation scores of the last training iteration. | None |
| early_stopping_rounds | | If a positive number is specified, the program checks the early stopping criteria at every specified number of training rounds. validation_freqs must also be set when using early stopping. | None |
| metrics | | Metrics to use when performing evaluation during training. If the metrics have not improved at early_stopping_rounds, training stops before convergence. If left empty, default metrics are used. For regression tasks, the default metrics are ['root_mean_squared_error', 'mean_absolute_error']. | None |
| use_first_metric_only | | Indicate whether to use the first metric in | False |
| floating_point_precision | | If not None, use floating_point_precision bits to speed up calculation, e.g. convert x to `round(x * 2**floating_point_precision)` during Paillier operations and divide the result by `2**floating_point_precision` at the end. | 23 |
| callback_param | | Callback param. | CallbackParam() |
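The learning-rate schedule given for `decay` and `decay_sqrt` can be written out directly. The sketch below only restates the two formulas from the table; the function name is hypothetical and is not part of the library.

```python
import math


def decayed_learning_rate(lr0, decay, t, decay_sqrt=True):
    """Learning rate at iteration t, following the schedule in the parameter table."""
    if decay_sqrt:
        return lr0 / math.sqrt(1 + decay * t)
    return lr0 / (1 + decay * t)


# With the defaults (learning_rate=0.01, decay=1, decay_sqrt=True):
for t in range(4):
    print(t, decayed_learning_rate(0.01, 1, t))
```

The square-root variant shrinks the step size more slowly, which is why the two options give noticeably different rates after only a few iterations.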

Source code in python/federatedml/param/linear_regression_param.py


Attributes

sqn_param = copy.deepcopy(sqn_param) (instance attribute)

encrypted_mode_calculator_param = copy.deepcopy(encrypted_mode_calculator_param) (instance attribute)

Functions

check()

Source code in python/federatedml/param/linear_regression_param.py
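The conversion described for `floating_point_precision` is a fixed-point encoding. The sketch below shows the round trip with plain integers. No Paillier encryption is involved: integer addition merely stands in for the homomorphic addition that would be applied to ciphertexts, and the helper names are hypothetical.

```python
def encode(x, precision=23):
    """Scale a float to an integer: round(x * 2**precision)."""
    return round(x * 2 ** precision)


def decode(value, precision=23):
    """Undo the scaling by dividing by 2**precision at the end."""
    return value / 2 ** precision


# Adding two encoded values and decoding once recovers the sum.
a, b = 0.125, 2.5
total = decode(encode(a) + encode(b))
print(total)

# Values that are not exact multiples of 2**-precision pick up a small rounding error.
roundtrip_error = abs(decode(encode(0.1)) - 0.1)
print(roundtrip_error)
```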

Features

- L1 & L2 regularization
- Mini-batch mechanism
- Six optimization methods:
    - sgd: gradient descent with arbitrary batch size
    - rmsprop: RMSProp
    - adam: Adam
    - adagrad: AdaGrad
    - nesterov_momentum_sgd: Nesterov Momentum
    - stochastic quasi-newton: for details of the algorithm, refer to this paper.
- Three convergence criteria:
    - diff: use the difference of loss between two iterations; not available for multi-host training
    - abs: use the absolute value of loss
    - weight_diff: use the difference of model weights
- Support multi-host modeling tasks. For details on how to configure a multi-host modeling task, please refer to this guide.
- Support validation at an arbitrary iteration interval
- Learning rate decay mechanism
- Support an early stopping mechanism, which checks for performance change on specified metrics over training rounds. Early stopping is triggered when no improvement is found at early stopping rounds.
- Support sparse format data as input.
- Support stepwise. For details on stepwise mode, please refer to stepwise.
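The three convergence criteria listed above can be compared side by side in a small sketch. The function and its arguments are hypothetical, and measuring the weight difference with the Euclidean norm is an assumption, since the text only says "difference of model weights".

```python
import math


def has_converged(method, tol, loss=None, prev_loss=None,
                  weights=None, prev_weights=None):
    """Return True when the chosen criterion falls below the tolerance."""
    if method == "abs":
        # Absolute value of the current loss.
        return abs(loss) < tol
    if method == "diff":
        # Change in loss between two consecutive iterations.
        if prev_loss is None:
            return False
        return abs(loss - prev_loss) < tol
    if method == "weight_diff":
        # Assumption: Euclidean distance between consecutive weight vectors.
        if prev_weights is None:
            return False
        distance = math.sqrt(sum((w - p) ** 2 for w, p in zip(weights, prev_weights)))
        return distance < tol
    raise ValueError(f"unknown early_stop method: {method}")


print(has_converged("diff", 1e-4, loss=0.50001, prev_loss=0.50002))
print(has_converged("abs", 1e-4, loss=0.5))
print(has_converged("weight_diff", 1e-4, weights=[1.0, 2.0], prev_weights=[1.0, 2.00001]))
```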

Hetero-SSHE-LinR features:

- L1 & L2 regularization
- Mini-batch mechanism
- Support different encrypt-mode to balance speed and security
- Support early-stopping mechanism
- Support setting arbitrary metrics for validation during training
- Support model encryption with host model
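The early-stopping mechanism mentioned for both models can be sketched as a loop over validation scores. This is a simplified illustration under stated assumptions: a single metric where lower is better (such as root mean squared error), and a hypothetical function name that is not part of the library.

```python
def early_stop_iteration(scores, early_stopping_rounds, lower_is_better=True):
    """Return the index of the validation round that triggers early stopping, or None."""
    best = None
    rounds_without_improvement = 0
    for i, score in enumerate(scores):
        if best is None:
            improved = True
        elif lower_is_better:
            improved = score < best
        else:
            improved = score > best
        if improved:
            best = score
            rounds_without_improvement = 0
        else:
            rounds_without_improvement += 1
            if rounds_without_improvement >= early_stopping_rounds:
                return i
    return None


# Validation RMSE per validation round; the best value is reached at index 2.
rmse_history = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73]
stop_at = early_stop_iteration(rmse_history, early_stopping_rounds=2)
print(stop_at)
```

A larger `early_stopping_rounds` tolerates longer plateaus before stopping, at the cost of more training rounds.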