Hetero Feature Binning

Feature binning, or data binning, is a data pre-processing technique. It can be used to reduce the effects of minor observation errors, to calculate information values, and so on.

Currently, we provide quantile binning and bucket binning methods. Quantile binning is built on a special data structure described in this paper; see the paper for the detailed algorithm.
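As a rough illustration of how the two methods differ, the sketch below computes split points with NumPy. It uses exact quantiles from `numpy.quantile` instead of the approximate quantile-summary structure from the paper, and the helper names `bucket_split_points` and `quantile_split_points` are hypothetical, not part of this module.

```python
import numpy as np

def bucket_split_points(values, bin_num):
    """Equal-width binning: cut the [min, max] range into bin_num intervals."""
    lo, hi = float(np.min(values)), float(np.max(values))
    # Keep the upper bound of every bin; the last one is the feature maximum.
    return np.linspace(lo, hi, bin_num + 1)[1:]

def quantile_split_points(values, bin_num):
    """Equal-frequency binning: each bin holds roughly the same number of rows."""
    probs = np.arange(1, bin_num + 1) / bin_num
    return np.quantile(values, probs)

# A skewed feature: one outlier stretches the range.
values = np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 100], dtype=float)
bucket_points = bucket_split_points(values, 4)      # driven by the outlier
quantile_points = quantile_split_points(values, 4)  # follows the data density
```

With a skewed feature like this one, bucket binning places almost every row in the first bin, while quantile binning spreads the rows evenly.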

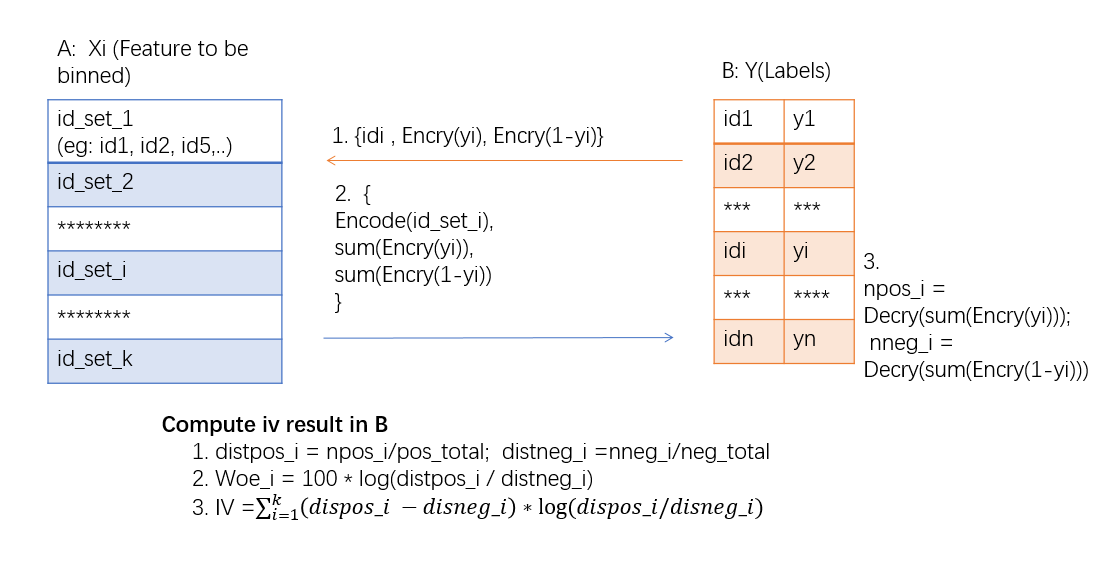

The following figure describes how the federated iv and woe values are calculated.

As the figure shows, party B, which holds the data labels, encrypts its labels with additive homomorphic encryption and sends them to A. A computes the label sum of each bin and sends the results back. B can then calculate woe and iv from this information.

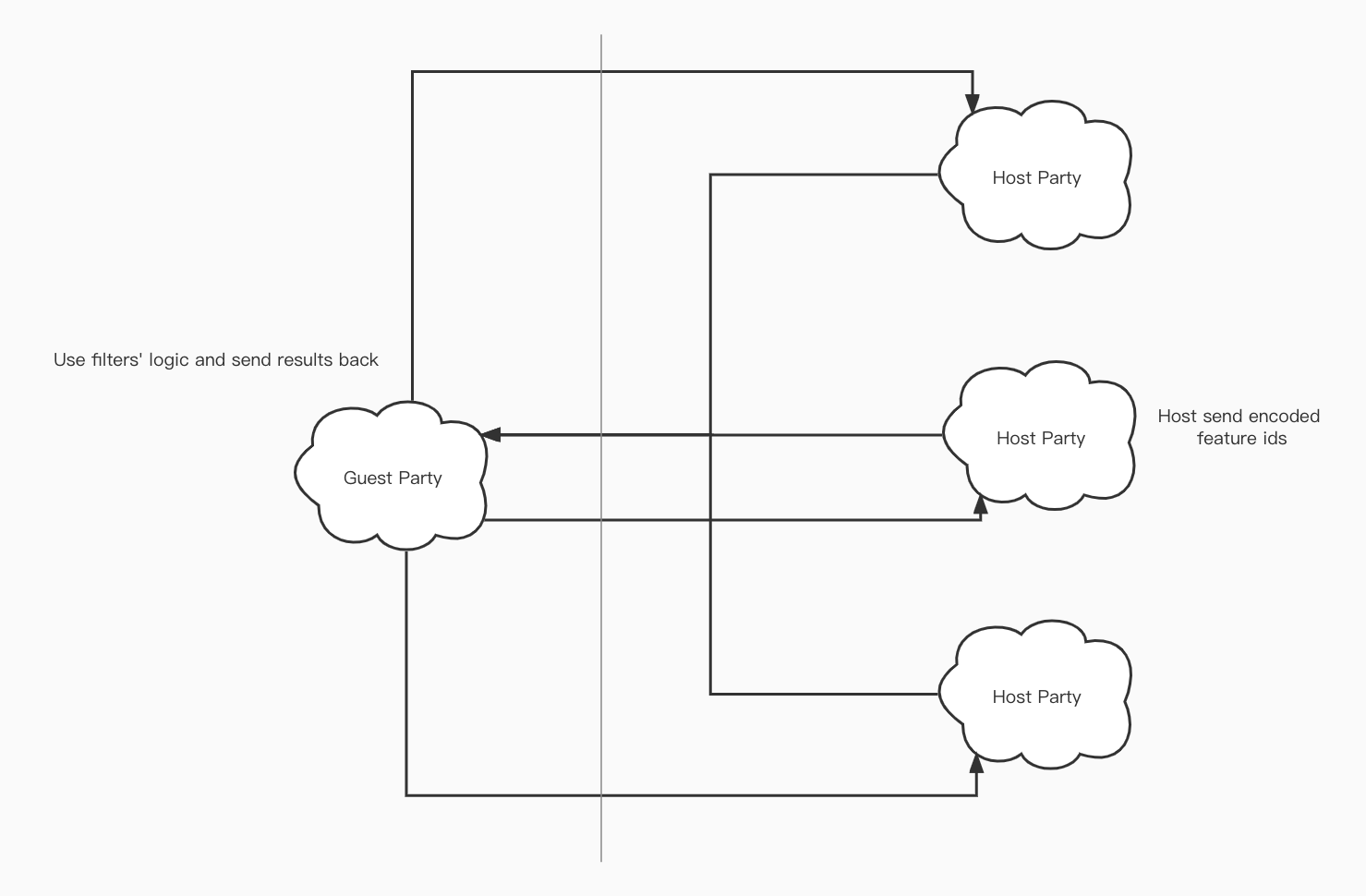
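The two computational steps in this exchange can be sketched as follows. This is a simplified illustration with the encryption step omitted: plaintext 0/1 labels stand in for ciphertexts, and the per-bin sums stand in for homomorphic additions. The function names, the smoothing with an adjustment factor, and the sign convention `log(event_rate / non_event_rate)` are assumptions made for the example.

```python
import math

def host_bin_label_sums(bin_indexes, labels, bin_num):
    """Party without labels: per-bin sample counts and label sums.

    In the real protocol `labels` would be ciphertexts and the sums would be
    homomorphic additions; plaintext labels are used here for illustration.
    """
    counts = [0] * bin_num
    event_sums = [0] * bin_num
    for b, y in zip(bin_indexes, labels):
        counts[b] += 1
        event_sums[b] += y
    return counts, event_sums

def guest_woe_iv(counts, event_sums, adjustment_factor=0.5):
    """Label holder: turn (decrypted) per-bin sums into per-bin WOE and total IV."""
    event_total = sum(event_sums)
    non_event_total = sum(counts) - event_total
    woes, iv = [], 0.0
    for count, events in zip(counts, event_sums):
        non_events = count - events
        if events == 0 or non_events == 0:
            # Assumed smoothing: avoid log(0) when a bin lacks one class.
            events += adjustment_factor
            non_events += adjustment_factor
        event_rate = events / event_total
        non_event_rate = non_events / non_event_total
        woe = math.log(event_rate / non_event_rate)
        woes.append(woe)
        iv += (event_rate - non_event_rate) * woe
    return woes, iv

bin_indexes = [0, 0, 0, 0, 1, 1, 1, 1]
labels = [1, 0, 0, 0, 1, 1, 1, 0]
counts, event_sums = host_bin_label_sums(bin_indexes, labels, bin_num=2)
woes, iv = guest_woe_iv(counts, event_sums)
```

The party that bins the feature only ever handles sums, and the label holder only ever sees per-bin aggregates, which is the point of the exchange.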

The multiple-host case is similar to the single-host case. Guest sends its encrypted label information to all hosts, and each host calculates the statistics and sends them back.

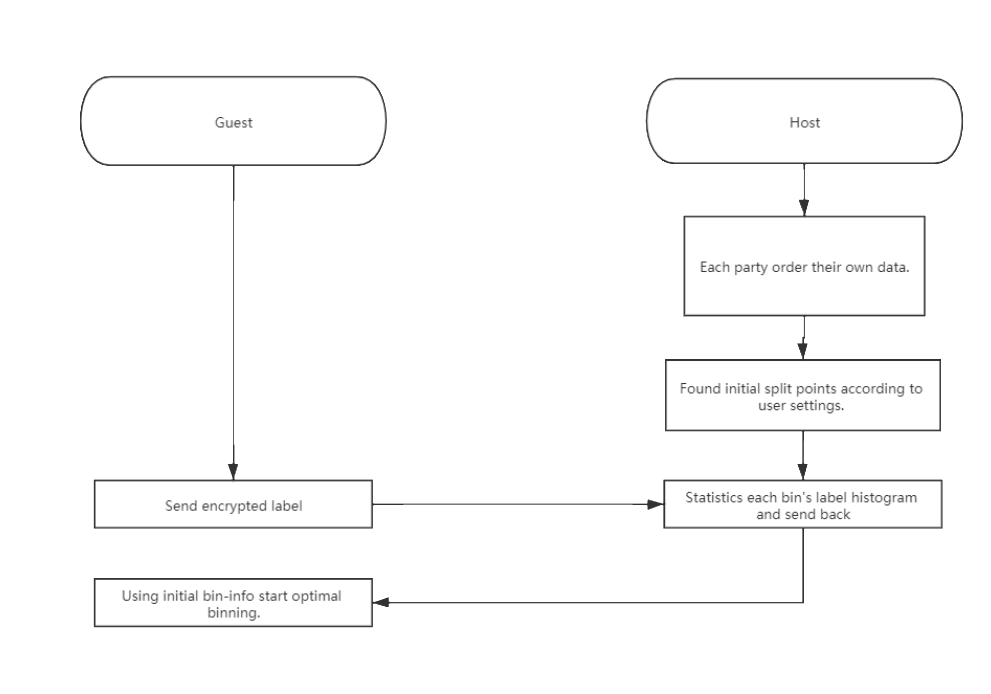

For optimal binning, each party first uses quantile binning or bucket binning to find initial split points. Guest then sends encrypted labels to Host. Host uses them to calculate the histogram of each bin and sends the result back to Guest. The optimal binning procedure then starts.

There are two kinds of methods: merge-optimal binning and split-optimal binning. When iv, gini or chi-square is chosen as the metric, merge-type optimal binning is used. If ks is chosen, split-type optimal binning is used.
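To give an intuition for the merge-type approach, here is a simplified greedy sketch that uses iv as the metric. It starts from the per-bin histograms and repeatedly merges the adjacent pair whose merge keeps the total iv highest. This is not the module's actual algorithm: it ignores constraints such as the minimum and maximum bin percentage, and the function names and smoothing are assumptions.

```python
import math

def iv_of(bins, adjustment=0.5):
    """Information value of a list of (event_count, non_event_count) bins."""
    event_total = sum(e for e, _ in bins)
    non_event_total = sum(n for _, n in bins)
    iv = 0.0
    for e, n in bins:
        if e == 0 or n == 0:
            # Assumed smoothing so that log() is always defined.
            e, n = e + adjustment, n + adjustment
        event_rate, non_event_rate = e / event_total, n / non_event_total
        iv += (event_rate - non_event_rate) * math.log(event_rate / non_event_rate)
    return iv

def merge_optimal_bins(bins, max_bins):
    """Greedily merge the adjacent pair whose merge keeps iv highest."""
    bins = list(bins)
    while len(bins) > max_bins:
        best = None
        for i in range(len(bins) - 1):
            merged = (bins[i][0] + bins[i + 1][0], bins[i][1] + bins[i + 1][1])
            candidate = bins[:i] + [merged] + bins[i + 2:]
            score = iv_of(candidate)
            if best is None or score > best[0]:
                best = (score, candidate)
        bins = best[1]
    return bins

# Histograms of five initial bins: (event_count, non_event_count).
initial = [(1, 9), (2, 8), (2, 8), (7, 3), (8, 2)]
final = merge_optimal_bins(initial, max_bins=2)
```

In this example the three low-event bins end up merged together and the two high-event bins end up merged together, because those merges lose the least information.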

The metrics of optimal binning are listed below:

| Optimal Binning Metric Type | Input Data Case |
|---|---|
| chi-square | dense input, sparse input |
| gini | dense input, sparse input |
| iv | dense input, sparse input |
| ks | dense input, sparse input |

The binning module also supports calculating iv and woe for multi-class data, using a one-vs-rest mechanism. Each label is chosen in turn as the event case, and all other labels are treated as non-event cases. This yields one set of iv & woe results per label.
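The one-vs-rest idea can be sketched in a few lines. The example below is illustrative only: it works on plaintext labels for a single, already binned feature, and the helper names and the smoothing factor are assumptions rather than the module's API.

```python
import math
from collections import Counter

def binary_iv(bin_indexes, events, adjustment=0.5):
    """IV of one feature, given a bin index and a 0/1 event flag per row."""
    event_counts, non_event_counts = Counter(), Counter()
    for b, is_event in zip(bin_indexes, events):
        (event_counts if is_event else non_event_counts)[b] += 1
    event_total = sum(event_counts.values())
    non_event_total = sum(non_event_counts.values())
    iv = 0.0
    for b in set(bin_indexes):
        e, n = event_counts[b], non_event_counts[b]
        if e == 0 or n == 0:
            # Assumed smoothing for bins that lack one of the two classes.
            e, n = e + adjustment, n + adjustment
        event_rate, non_event_rate = e / event_total, n / non_event_total
        iv += (event_rate - non_event_rate) * math.log(event_rate / non_event_rate)
    return iv

def one_vs_rest_iv(bin_indexes, labels):
    """Treat each label in turn as the event case and all others as non-event."""
    return {
        label: binary_iv(bin_indexes, [int(y == label) for y in labels])
        for label in sorted(set(labels))
    }

bin_indexes = [0, 0, 0, 1, 1, 1, 2, 2, 2]
labels = ["a", "a", "b", "b", "b", "c", "c", "c", "a"]
iv_per_label = one_vs_rest_iv(bin_indexes, labels)
```

The result is a dictionary with one iv value per label, mirroring the "one result set per label" behaviour described above.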

Features

- Support Quantile Binning based on quantile summary algorithm.

- Support Bucket Binning.

- Support missing value input by ignoring them.

- Support sparse data format generated by dataio component.

- Support calculating woe and iv as well as counting positive and negative cases for each bin.

- Support transforming data into bin indexes or woe value.

- Support multiple-host binning.

- Support 4 types of optimal binning.

- Support asymmetric binning methods on Host & Guest sides.

- Support multi-class iv & woe calculation.

The supported features are listed below:

| Cases | Scenario |
|---|---|
| Input Data with Missing Value | bucket binning, quantile binning |
| Input Data with Categorical Features | bucket binning, quantile binning, optimal binning |
| Input Data in Sparse Format | bucket binning, quantile binning, optimal binning |
| Input Data with Multi-Class (label) | single host, multi-host |
| Output Data Transformed | bin index, woe value (guest-only) |
| Skip Statistic Calculation | bucket binning, quantile binning |

Param

feature_binning_param

Classes

TransformParam(transform_cols=-1, transform_names=None, transform_type='bin_num')

Bases: BaseParam

Defines how to transform the columns.

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| transform_cols | list of column index | Specifies which columns need to be transformed. If it is None, no columns are transformed. If it is -1, the same columns as cols in the binning module are used. Note that columns specified by … | -1 |
| transform_names | | Specifies which columns need to be calculated. Each element in the list is a column name in the header. Note that columns specified by … | None |
| transform_type | | Specifies what the values of these columns are replaced with. 1. bin_num: replace the original feature value with the index of the bin it belongs to. 2. woe: valid for the guest party only; replace the original value with its woe value. 3. None: nothing is replaced. | 'bin_num' |
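The effect of the three `transform_type` options can be illustrated with a small NumPy sketch. It is not the module's implementation: the function `transform_column` is hypothetical, and it assumes that each split point is the inclusive upper bound of its bin and that values above the last split point fall into the last bin.

```python
import numpy as np

def transform_column(values, split_points, transform_type="bin_num", woe_per_bin=None):
    """Replace raw values by bin index or woe, or leave them unchanged for None."""
    values = np.asarray(values, dtype=float)
    if transform_type is None:
        return values
    # Assumption: each split point is the inclusive upper bound of its bin.
    bin_idx = np.searchsorted(split_points, values, side="left")
    # Assumption: values above the last split point go into the last bin.
    bin_idx = np.minimum(bin_idx, len(split_points) - 1)
    if transform_type == "bin_num":
        return bin_idx
    if transform_type == "woe":
        return np.asarray(woe_per_bin)[bin_idx]
    raise ValueError(f"unsupported transform_type: {transform_type}")

split_points = [10.0, 20.0, 30.0]
raw = [5, 10, 15, 30, 99]
as_bins = transform_column(raw, split_points)  # bin indexes
as_woe = transform_column(raw, split_points, "woe", woe_per_bin=[-0.4, 0.1, 0.7])
unchanged = transform_column(raw, split_points, transform_type=None)
```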

Source code in python/federatedml/param/feature_binning_param.py

Attributes

- transform_cols = transform_cols (instance attribute)
- transform_names = transform_names (instance attribute)
- transform_type = transform_type (instance attribute)

Functions

- check()

OptimalBinningParam(metric_method='iv', min_bin_pct=0.05, max_bin_pct=1.0, init_bin_nums=1000, mixture=True, init_bucket_method='quantile')

Bases: BaseParam

Indicate optimal binning params

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| metric_method | | The algorithm metric method. Supports iv, gini, ks and chi-square. | 'iv' |
| min_bin_pct | | The minimum percentage of each bucket. | 0.05 |
| max_bin_pct | | The maximum percentage of each bucket. | 1.0 |
| init_bin_nums | | Number of bins at initialization. | 1000 |
| mixture | | Whether each bucket needs both event and non-event records. | True |
| init_bucket_method | | Initial bucketing method. Accepts quantile and bucket. | 'quantile' |
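One way to read `min_bin_pct`, `max_bin_pct` and `mixture` is as constraints that every final bucket must satisfy. The check below is a sketch under that assumed interpretation; the function name is hypothetical and the module's own validation may differ.

```python
def bins_satisfy_constraints(bins, min_bin_pct=0.05, max_bin_pct=1.0, mixture=True):
    """Check buckets given as (event_count, non_event_count) pairs.

    Assumed interpretation: each bucket's share of all records must lie in
    [min_bin_pct, max_bin_pct], and with mixture=True each bucket must
    contain at least one event and one non-event record.
    """
    total = sum(e + n for e, n in bins)
    for e, n in bins:
        pct = (e + n) / total
        if pct < min_bin_pct or pct > max_bin_pct:
            return False
        if mixture and (e == 0 or n == 0):
            return False
    return True

ok = bins_satisfy_constraints([(5, 45), (10, 40)])
no_events = bins_satisfy_constraints([(0, 50), (10, 40)])
no_events_allowed = bins_satisfy_constraints([(0, 50), (10, 40)], mixture=False)
too_small = bins_satisfy_constraints([(1, 1), (49, 49)])
```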

Attributes

- init_bucket_method = init_bucket_method (instance attribute)
- metric_method = metric_method (instance attribute)
- max_bin = None (instance attribute)
- mixture = mixture (instance attribute)
- max_bin_pct = max_bin_pct (instance attribute)
- min_bin_pct = min_bin_pct (instance attribute)
- init_bin_nums = init_bin_nums (instance attribute)
- adjustment_factor = None (instance attribute)

Functions

- check()

FeatureBinningParam(method=consts.QUANTILE, compress_thres=consts.DEFAULT_COMPRESS_THRESHOLD, head_size=consts.DEFAULT_HEAD_SIZE, error=consts.DEFAULT_RELATIVE_ERROR, bin_num=consts.G_BIN_NUM, bin_indexes=-1, bin_names=None, adjustment_factor=0.5, transform_param=TransformParam(), local_only=False, category_indexes=None, category_names=None, need_run=True, skip_static=False)

Bases: BaseParam

Define the feature binning method

Parameters:

| Name | Type | Description | Default |
|---|---|---|---|
| method | str, quantile | Binning method. | consts.QUANTILE |
| compress_thres | | When the number of saved summaries exceeds this threshold, the compress function is called. | consts.DEFAULT_COMPRESS_THRESHOLD |
| head_size | | The buffer size for storing inserted observations. When the head list reaches this size, the QuantileSummaries object starts to generate summaries (or stats) and inserts them into its sampled list. | consts.DEFAULT_HEAD_SIZE |
| error | | The error tolerance of binning. The final split point comes from the original data, and the rank of this value is close to the exact rank. More precisely, floor((p - 2 * error) * N) <= rank(x) <= ceil((p + 2 * error) * N), where p is the quantile as a float and N is the total number of data points. | consts.DEFAULT_RELATIVE_ERROR |
| bin_num | | The maximum number of bins. | consts.G_BIN_NUM |
| bin_indexes | list of int or int | Specifies which columns need to be binned. -1 means all columns. To select specific columns, provide a list of header indexes instead of -1. Note that columns specified by … | -1 |
| bin_names | list of string | Specifies which columns need to be calculated. Each element in the list is a column name in the header. Note that columns specified by … | None |
| adjustment_factor | float | The adjustment factor used when calculating WOE. This is useful when a bin contains no event or no non-event records. Note that this parameter does NOT take effect when set on the host side. | 0.5 |
| category_indexes | list of int or int | Specifies which columns are category features. -1 means all columns; a list of int indicates a set of such features. For category features, bin_obj takes the original values as split_points and treats them as already binned. If this is not what you expect, do NOT put the column into this parameter. The number of categories should not exceed bin_num set above. Note that columns specified by … | None |
| category_names | list of string | Uses column names to specify category features. Each element in the list is a column name in the header. Note that columns specified by … | None |
| local_only | bool | Whether to provide the binning method to the guest party only. If true, the host party does nothing. Warning: this parameter will be deprecated in a future version. | False |
| transform_param | | Defines how to transform the binned data. | TransformParam() |
| need_run | | Indicates whether this module needs to be run. | True |
| skip_static | | If true, binning does not calculate iv, woe etc. In this case, optimal binning is not supported. | False |
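As a worked example of the rank bound given for `error`, the snippet below evaluates floor((p - 2 * error) * N) and ceil((p + 2 * error) * N) for a median split point. The values of `p`, `error` and `n` are picked for illustration only, and `rank_bounds` is a hypothetical helper.

```python
import math

def rank_bounds(p, error, n):
    """Rank interval guaranteed for the split point of quantile p."""
    lower = math.floor((p - 2 * error) * n)
    upper = math.ceil((p + 2 * error) * n)
    return lower, upper

# Median of 12,345 rows with error = 0.001: the exact rank would be about 6172.
bounds = rank_bounds(p=0.5, error=0.001, n=12_345)
```

Here the returned split point is guaranteed to have a rank between 6147 and 6198, that is, within roughly 0.2% of the data on either side of the exact median.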

Attributes

- method = method (instance attribute)
- compress_thres = compress_thres (instance attribute)
- head_size = head_size (instance attribute)
- error = error (instance attribute)
- adjustment_factor = adjustment_factor (instance attribute)
- bin_num = bin_num (instance attribute)
- bin_indexes = bin_indexes (instance attribute)
- bin_names = bin_names (instance attribute)
- category_indexes = category_indexes (instance attribute)
- category_names = category_names (instance attribute)
- transform_param = copy.deepcopy(transform_param) (instance attribute)
- need_run = need_run (instance attribute)
- skip_static = skip_static (instance attribute)
- local_only = local_only (instance attribute)

Functions

- check()

HeteroFeatureBinningParam(method=consts.QUANTILE, compress_thres=consts.DEFAULT_COMPRESS_THRESHOLD, head_size=consts.DEFAULT_HEAD_SIZE, error=consts.DEFAULT_RELATIVE_ERROR, bin_num=consts.G_BIN_NUM, bin_indexes=-1, bin_names=None, adjustment_factor=0.5, transform_param=TransformParam(), optimal_binning_param=OptimalBinningParam(), local_only=False, category_indexes=None, category_names=None, encrypt_param=EncryptParam(), need_run=True, skip_static=False, split_points_by_index=None, split_points_by_col_name=None)

Bases: FeatureBinningParam

split_points_by_col_name: dict, default None

Manually specified split points for local features. Each key should be a feature name and each value a sorted list of split points. Together with split_points_by_index, the keys should cover all local features, including categorical features. Note that each split point list should have a length equal to the desired bin number (n): the first (n-1) entries are the inclusive maximum values of the first (n-1) bins, and the nth entry is the maximum of the feature.
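The following sketch shows what such a dictionary might look like and checks it against the rules stated above. The feature names, the values and the helper `check_manual_split_points` are made up for illustration. The helper also assumes that all features are specified by name, so it does not take split_points_by_index into account.

```python
def check_manual_split_points(split_points_by_col_name, feature_max):
    """Validate manual split points and return the bin number per feature.

    feature_max maps every local feature name to its maximum observed value.
    """
    missing = sorted(set(feature_max) - set(split_points_by_col_name))
    if missing:
        raise ValueError(f"no split points given for: {missing}")
    bin_nums = {}
    for name, points in split_points_by_col_name.items():
        if list(points) != sorted(points):
            raise ValueError(f"split points for {name!r} must be sorted")
        if points[-1] != feature_max[name]:
            raise ValueError(f"last split point for {name!r} must be the feature max")
        # n split points describe n bins: the last entry closes the last bin.
        bin_nums[name] = len(points)
    return bin_nums

# Hypothetical features: "age" gets 4 bins, "income" gets 3 bins.
split_points_by_col_name = {
    "age": [25, 40, 60, 95],
    "income": [1000.0, 5000.0, 250000.0],
}
feature_max = {"age": 95, "income": 250000.0}
bin_nums = check_manual_split_points(split_points_by_col_name, feature_max)
```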

Attributes

- optimal_binning_param = copy.deepcopy(optimal_binning_param) (instance attribute)
- encrypt_param = encrypt_param (instance attribute)
- split_points_by_index = split_points_by_index (instance attribute)
- split_points_by_col_name = split_points_by_col_name (instance attribute)

Functions

- check()

HomoFeatureBinningParam(method=consts.VIRTUAL_SUMMARY, compress_thres=consts.DEFAULT_COMPRESS_THRESHOLD, head_size=consts.DEFAULT_HEAD_SIZE, error=consts.DEFAULT_RELATIVE_ERROR, sample_bins=100, bin_num=consts.G_BIN_NUM, bin_indexes=-1, bin_names=None, adjustment_factor=0.5, transform_param=TransformParam(), category_indexes=None, category_names=None, need_run=True, skip_static=False, max_iter=100)

Bases: FeatureBinningParam

Attributes

- sample_bins = sample_bins (instance attribute)
- max_iter = max_iter (instance attribute)

Functions

- check()